Robo-journalism before and after ChatGPT

The news industry has long been “collaborating” with writing algorithms. Generative AI has just made it visible.

On March 9, 2026, The New York Times launched the quiz “Who’s a better writer: AI or humans?” Readers were invited to choose the better-written sample in five pairs of texts across different genres. One day later, after 86,000 people had taken the quiz, the result was impressive—or confusing, depending on where you stand on AI–human replacement. 54% preferred the samples written by AI.

The result, of course, raises a troubling question about the future of journalism. However much digital folklore mocks AI and its ability to write or create, the mockery misses what really matters. It is not the current results that matter, but the dynamics. The potential of AI writing or creativity should be judged not by the results achieved in the three years since it appeared, but by how quickly it has improved in such a short time.

Indeed, ChatGPT has been in mass use for just over three years, and other LLMs for even less. In that short time, they have taken over a large share of content and creative production that used to be done by humans, and now users are beginning to prefer it, in The New York Times, of all places. Imagine the awkward takeaway: “54% of New York Times readers preferred AI-written texts to human-written ones.”

However awkward or imperfect AI content may sometimes seem, content production is moving in that direction. And now users are signaling that they do not mind and sometimes even prefer AI.

But the alarm comes a bit late. In fact, the takeover began quietly more than a decade ago. The first contest between a bio-journalist and a robo-journalist happened in 2015, when NPR’s Scott Horsley competed with the WordSmith algorithm to write a financial report. That was seven years before ChatGPT. In 2014, WordSmith alone, one of the two leading writing algorithms at the time, produced 1 billion news stories—likely more than all human journalists combined that year. In 2013, probably the first university study comparing robo-journalistic and bio-journalistic writing was conducted in Sweden—and the results were inconclusive. That was the time to worry about AI taking over journalism, not now.

I wrote about robo-journalists in 2016, covering real stories of how writing algorithms were put to work in newsrooms. For some reason, they were little known even in the industry, despite already being widely used at the time.

Awakened now by the obvious threat from AI, many still dismiss the trend with outdated claims that AI cannot produce real creativity. But that is not the point. The point is that AI works faster and cheaper, has no complaints or unions, and readers do not seem to mind. If AI can deliver comparable quality at a much lower cost, why keep humans? Except for the love of humans, of course. That’s what The New York Times quiz unwittingly revealed.

Anyway, I think the NYT AI–human quiz is a good hook to revisit the true and much longer story of robo-journalism that dates back long before ChatGPT. So here is my 2016 essay, “Robo-journalism: the third threat,” which traces the early competition between human journalists and proto-AI in journalism (slightly abridged[1]).

Robo-journalism: the third threat

The first contest between cyber journalists and bio-journalists ended in a tie.—Three threats to journalism.—News stories about earthquakes and tectonic shifts.—Generative journalism.—Two arguments about “robots’ incapability.”—Road map for robot journalism.—Forecasts and suggestions. (Published in 2016, revised).

The first contest between cyber journalists and bio-journalists ended in a tie

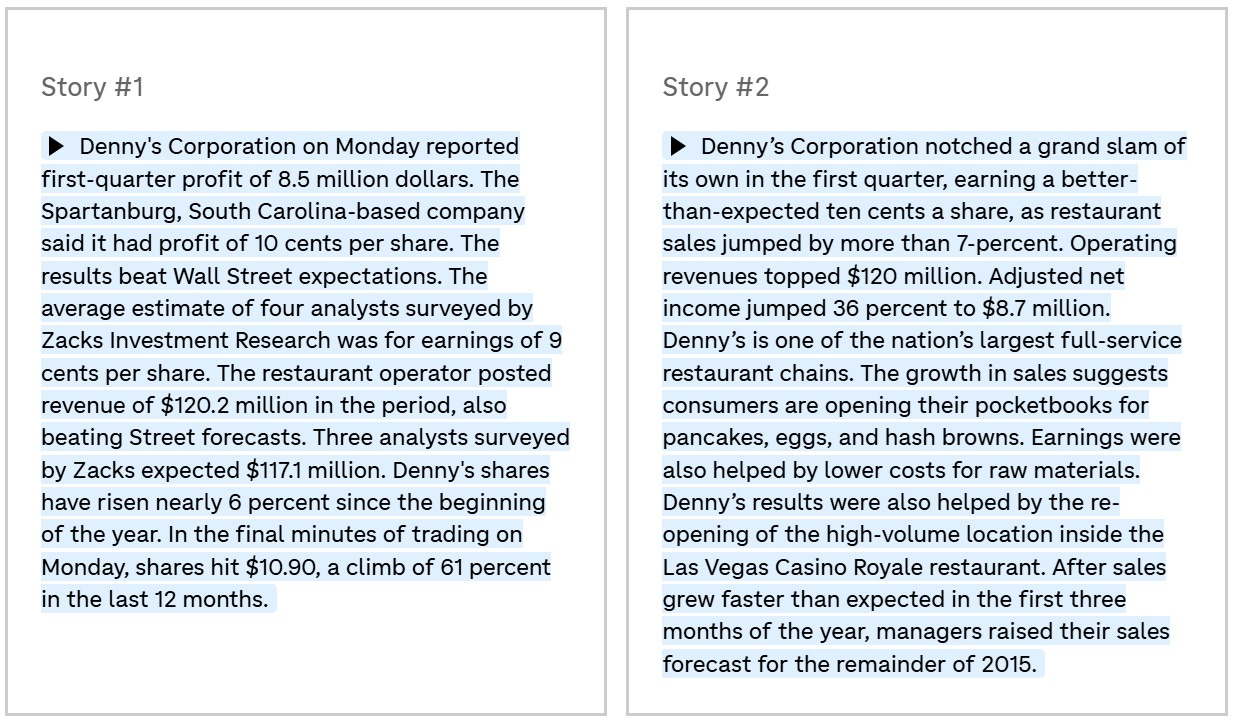

In May 2015, NPR White House correspondent and former business journalist Scott Horsley took on the WordSmith algorithm created by Automated Insights. “We wanted to know: How would NPR’s best stack up against the machine?” NPR wrote. Since NPR is a radio network, a human reporter working there is expected to be very good at fast reporting. Under the rules of the test, both competitors waited for Denny’s, the restaurant chain, to release its earnings report. Scott seemed to have an advantage—he was a Denny’s regular. He even had a regular waitress there, Genevieve, who knew his favorite order: Moons Over My Hammy. It didn’t help… though that depends on how you judge the results.

The robot completed the task in two minutes. It took Scott Horsley a bit more than seven minutes to finish. NPR published both news pieces to offer readers a sort of journalistic “Turing test” (again, it was 2015, 11 years before The New York Times quiz and 7 years before ChatGPT). Can you identify which piece was written by a robot and which one by a human?

The piece on the left was written by the robot. It contains more numbers and uses a drier style. Scott, by contrast, added some unnecessary detail to his version of the financial report, for example with this sentence: “the growth in sales suggests consumers are opening their pocketbooks for pancakes, eggs and hash browns.”

Technically, the robot’s vocabulary is larger because it contains the whole language. But it has to rely on the most relevant and conventional words, the ones with high frequency, and that eventually makes its style dry. In addition, its vocabulary is restricted by its specialized task. For example, a robot would not use culinary or sports terms like “hash browns” or “grand slam” in a financial report. Why would it? That lies outside the program.

Humans behave in the exact opposite way in this regard. A human writer is not limited by word frequency or strict relevance and can freely use rare or colorful words. This broadens the context and makes the writing more vivid. An original, unconventional word use is also what makes a writer’s style. Human writers even feel compelled to use unusual words, because self-expression is part of our social “program.” Robots, by contrast, have no need for originality when producing a financial report.

“But that could change,” NPR suggests. If the owner feeds WordSmith more varied NPR stories and adjusts the algorithm to diversify its vocabulary, the program’s wording could become broader. These things can be modified with ease, unlike humans, who need long training to learn to write, if they ever manage it at all.

So who won the competition? The robot wrote faster and in a more businesslike style. Scott Horsley sounded more “human” (no surprise), but he was slower. The audience for this writing is people in the financial industry. Is the lyrical aside about wallets and pancakes useful to them? As long as the readers are humans, not other robots, it might be.

Ultimately, it’s a tie, although two minutes versus seven may be critical for radio and for the financial news market.

Academics also set up a competition between a horse and a steam locomotive. Christer Clerwall, a media and communications professor from Karlstad, Sweden, asked 46 students to read two reports. One was written by a robot and the other by a human. The human story was shortened to match the length of the robot’s. The robot story was lightly edited by a human so that its headline, lead, and opening paragraphs followed the usual editorial style. The students then evaluated the stories on several criteria, including objectivity, trust, accuracy, boringness, interest, clarity, pleasure to read, usefulness, and coherence. (The research was conducted before 2014, the year of publication.)

The results showed that each story did better in different categories. The human story scored higher on “well-written,” “pleasant to read,” and similar qualities. The robot story scored higher on “objectivity,” “clear description,” “accuracy,” and others. So once again, humans and robots ended in a tie.

But the most important thing the Swedish study revealed is that the differences between the average text written by a human and one produced by a cyber-journalist may be negligible. This is a crucial point when assessing the future, and even the present, of robot journalism. Critics often say that robots cannot write better than humans. But that frames the issue the wrong way. “Maybe it doesn’t have to be better—how about ‘a good enough story’?” Professor Clerwall told Wired.

Three threats to journalism

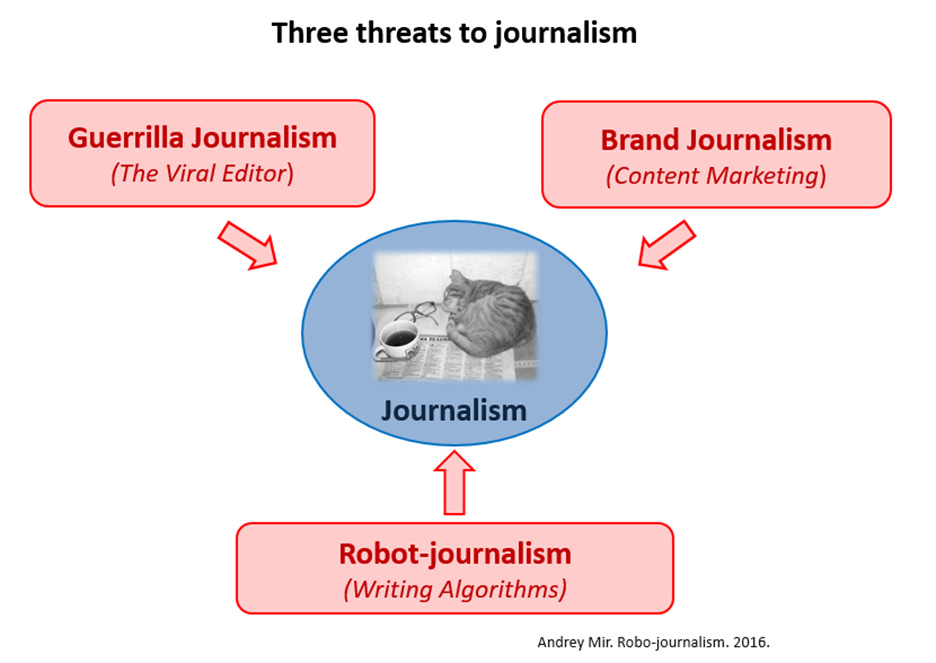

The internet emancipated authorship. Millions of people now inform each other about everything happening in the world. The surprising part is that, driven by enthusiasm, many do it for free. Yes, the internet contains a lot of rubbish, but people usually consume information that fits their interests. Content on the Web is not filtered before publication; it is filtered afterward, when it spreads, through the Viral Editor. As a result, legacy media lose their monopoly on shaping the news agenda. This is not only about the decline of newspapers, which is inevitable. The internet threatens old media not just because of the shift from print to digital, but also because the audience itself has become involved in news production and delivery.

Another threat to traditional journalism comes from content marketing. Corporations have also become authors, which means they rely less and less on traditional media as intermediaries. Companies can communicate directly. Brands have become media in their own right.

Content created by amateur writers, or bloggers, improves through cooperation (this is the Viral Editor). Corporations, by contrast, improve their media output through competition for public attention. In this media “arms race,” corporations attract professionals from media companies, adopt innovations, and most importantly shift from direct advertising to social agendas. Brands need an audience; advertising only pushes the audience away, while content marketing can gather it. Although the wider public hardly notices these processes, corporate media activity threatens traditional media just as much as the blogosphere does.

The blogosphere and corporate journalism are still human activities. But legacy journalism now faces a third threat, a soulless and inhuman one. Traditional media lose readers to the blogosphere and advertising to corporations, and their problems do not end there. People in the media may also lose their jobs to writing algorithms, this third threat.

Tired of competing with everything on the Internet, and now also threatened by algorithms, editors meet this new threat with anxiety or sometimes denial. Those more familiar with the issue usually say, “Okay, someday robots will write sports news, financial analysis, and weather reports. But they are incapable of anything else.”

That is the wrong take. Robots are already writing news about weather, sports, and finance, and they do it on a large scale. This is no longer about the future. And the answer to the question of whether robots are capable of anything else is “yes.”

News stories about earthquakes and tectonic shifts

This story went down in journalism history. On March 17, 2014, at 6:25 in the morning, journalist and programmer Ken Schwencke of The Los Angeles Times was jolted awake by an earth tremor. He rushed to his computer, where a news story written by the Quakebot algorithm was already waiting for him in the publishing system. Ken skimmed the report and pressed “Publish.” Thus, The Los Angeles Times became the first media outlet to report the earthquake, three minutes after the tremor. The robo-journalist outran its human colleagues.

The next Los Angeles Times earthquake report was published an hour after the second tremor. It was signed by Schwencke, but at the end there was a note: “This post was created by an algorithm written by the author.”

The Quakebot algorithm, written by Ken Schwencke, has been around for two years. It is connected to the U.S. Geological Survey and takes data directly from it: the location, time, and magnitude of an earthquake. It compares this information with records of previous earthquakes in the area and determines the event’s “historic significance.” The data is then placed into a standard template, and the news report is ready. The robot uploads it to the publishing system and sends a note to the editor. The report is far from Pulitzer-worthy, but it allows an editor to publish the news within minutes after it happens. Needless to say, earthquakes are major news in Los Angeles. The Los Angeles Times website even has a special “Earthquakes” section, which is filled by a trainee robot correspondent.
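The pipeline described above (take the event data, compare it with earlier events in the area, fill a standard template) can be sketched in a few lines. This is only an illustration: the `Quake` record, the `TEMPLATE` wording, the `historic_context` rule, and the sample values are all assumptions, not Quakebot's actual code, data source, or phrasing.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Quake:
    """One seismic event; the fields mirror the data named in the text."""
    place: str
    time: datetime
    magnitude: float


# Hypothetical template; the real Quakebot wording is not reproduced here.
TEMPLATE = (
    "A magnitude {mag:.1f} earthquake was reported at {clock} on {day} "
    "near {place}. {context}"
)


def historic_context(quake: Quake, past: list[Quake]) -> str:
    """Compare the new event with earlier ones recorded in the same place."""
    nearby = [q for q in past if q.place == quake.place]
    if not nearby:
        return "No earlier earthquakes are on record for this area."
    strongest = max(q.magnitude for q in nearby)
    if quake.magnitude > strongest:
        return "It is the strongest earthquake on record for this area."
    return (
        "The strongest earthquake on record for this area "
        f"had a magnitude of {strongest:.1f}."
    )


def write_report(quake: Quake, past: list[Quake]) -> str:
    """Fill the fixed template with the event data and its context."""
    return TEMPLATE.format(
        mag=quake.magnitude,
        clock=quake.time.strftime("%H:%M"),
        day=quake.time.strftime("%B %d, %Y"),
        place=quake.place,
        context=historic_context(quake, past),
    )


# Invented sample values, used only to show the flow.
past_events = [Quake("Sampletown", datetime(2010, 5, 1, 12, 0), 3.2)]
new_event = Quake("Sampletown", datetime(2014, 3, 17, 6, 25), 4.4)
report = write_report(new_event, past_events)
print(report)
```

Nothing here "writes" in any creative sense. The only judgment is the comparison inside `historic_context`, and everything else is substitution into preset phrases.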

Another robot handles the crime reports at The Los Angeles Times. It has been producing the Homicide Report since 2007. When a coroner adds information to the database about a violent death, the robot collects the available data, places the case on a map, categorizes it by race, gender, cause of death, police involvement, and other factors, and publishes a report online. If the case seems important, a human journalist later gathers more information and writes a longer news story. If not, the robot’s report is all that appears on the website.

Media critics point out that crime coverage has changed with the arrival of robot reporters. In the past, journalists covered only the murders that seemed most significant. Now robots cover every murder. Looking at data on all homicides shows how they are distributed across different districts on a map, both overall and in categories such as gender and race. These visualized statistics produce “secondary” content that human reporting usually misses. The maps of homicides created by robots also have additional value, for example for the real estate market.
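The "secondary" content mentioned above comes from nothing more than counting every case by category. A minimal sketch, assuming invented records and invented field names (`district`, `cause`) rather than the schema of any real coroner's database:

```python
from collections import Counter

# Invented records for illustration; the field names are assumptions,
# not the schema of any real coroner's database.
cases = [
    {"district": "North", "cause": "gunshot"},
    {"district": "North", "cause": "stabbing"},
    {"district": "Harbor", "cause": "gunshot"},
    {"district": "East", "cause": "gunshot"},
]


def count_by(records: list[dict[str, str]], field: str) -> Counter:
    """Count how many records share each value of the given field."""
    return Counter(record[field] for record in records)


by_district = count_by(cases, "district")
by_cause = count_by(cases, "cause")

# Districts ordered from most to fewest cases, ready to shade on a map.
for district, total in by_district.most_common():
    print(district, total)
```

A reporter who covers only the most notable cases never builds this table. An algorithm that logs every case gets it as a by-product.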

It is worth adding that the crime-reporting robot covers a territory of 10 million people, comparable to the population of Sweden or Portugal. Certainly, a bio-journalist can’t make instant statistical calculations on such a scale.

In such cases, robots help journalists collect facts and do the first processing of data. Journalists then have more time for creative work. This is true. A crime-reporting robot does not really write, and neither does Quakebot, which simply uses preset phrases and templates. They are useful helpers for humans, nothing more.

The cases of financial and sports reporting are a bit more complicated.

Generative journalism

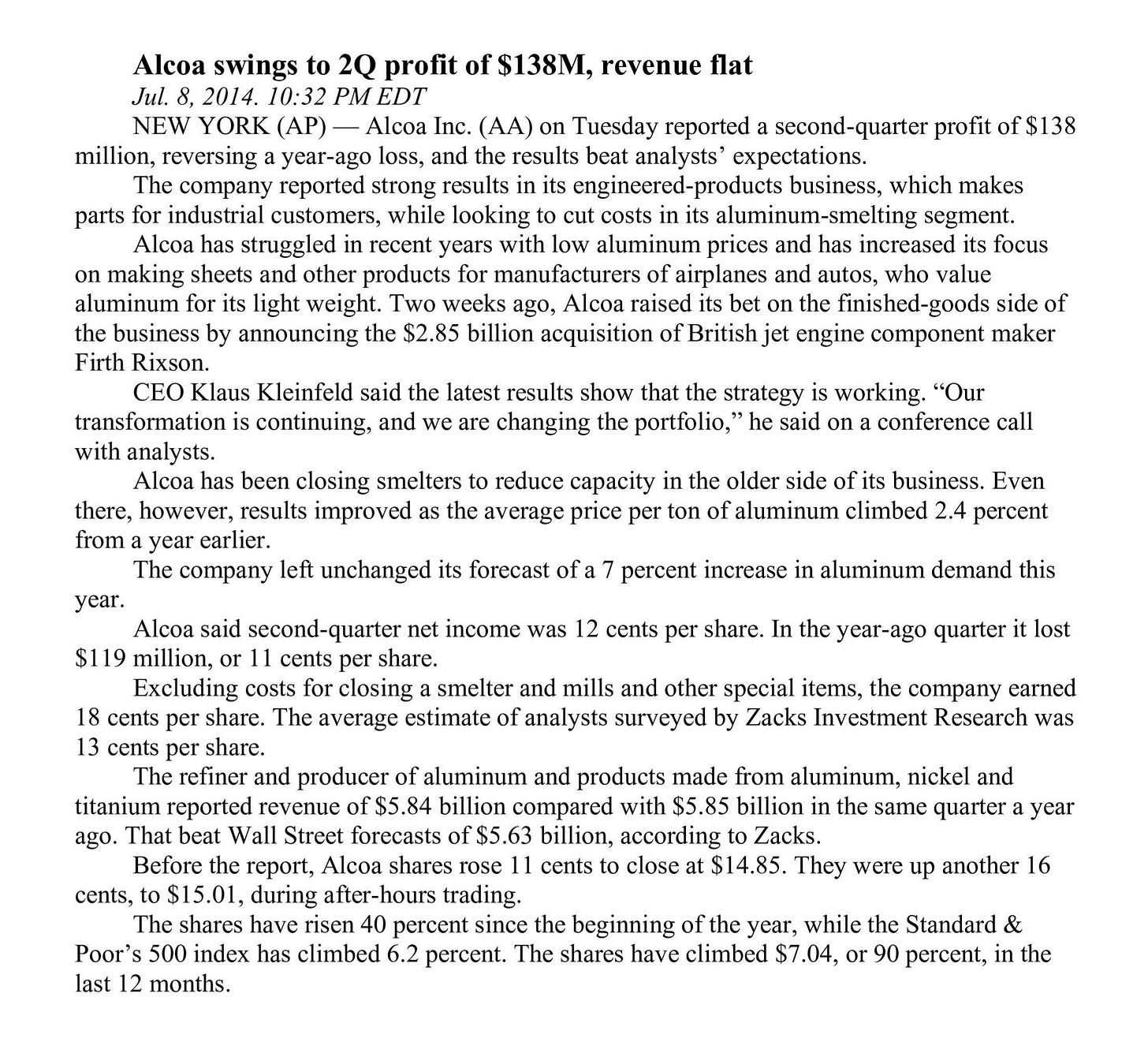

Here is a news story written by the WordSmith algorithm and published by the Associated Press (you don’t have to read it, just look at the quality of reporting).

This news report was produced in less than a second. The robot gathered the facts, compared the relevant market data, and generated a fairly detailed and coherent text.

Even more striking is that in 2014 the Associated Press published 3,000 WordSmith stories in a single quarter—about ten times more than AP journalists used to produce in the same period. By the way, robots are already entering the financial news market in some of the world’s leading media outlets. Another company, Narrative Science, which we might call a leader in generative journalism, provides its Quill robot service to Forbes.

Let’s look at sports reporting to see what is happening there. Here is a fragment of a kids’ baseball league game report written by the Stats Monkey algorithm, a system created by Narrative Science:

Friona fell 10-8 to Boys Ranch in five innings on Monday at Friona despite racking up seven hits and eight runs. Friona was led by a flawless day at the dish by Hunter Sundre, who went 2-2 against Boys Ranch pitching. Sundre singled in the third inning and tripled in the fourth inning … Friona piled up the steals, swiping eight bags in all …

Stats Monkey has a distinctive feature: it uses baseball slang. But that is not its only benefit. During youth games, parents enter the results into a special iPhone app while the game is still in progress. Fans, that is, the players’ relatives, receive a detailed report about the game even before the teams finish shaking hands on the field. Needless to say, for these fans such reports matter far more than a Super Cup report.

But there is even more to it. In 2011, an algorithm wrote 400,000 reports for the children’s league. In 2012, it wrote 1.5 million. For comparison, that year there were 35,000 journalists in the US. They would not be willing to cover Little League games, no matter how much they were offered. This reveals another aspect of robot journalism: algorithms can cover areas of journalism that human reporters skip because of “low newsworthiness.”

By the way

The digitalization of sports itself opens new possibilities for robot sports reporting. As Steven Levy wrote back in 2012 in an article for Wired, sports leagues now track every inch of the field and every player with cameras and sensors. Computers collect all kinds of data, such as ball speed, throw distance, leg movement, altitude, and other telemetry. A well-trained algorithm can detect when a pitcher’s throw weakens, or when a player starts leaning left before the batter makes a winning hit. Is this information important? Yes, but a human reporter would rarely notice it. Traditional sports journalism cannot capture this kind of detail, just as traditional crime reporting lacks interactive maps showing the distribution and density of crimes.

In other words, robots have already surpassed human reporters in data journalism, in speed, and in the scale of news coverage. But can they beat humans in writing style?

Two arguments about “robots’ incapability”

The main argument against the future dominance of robots is that machines cannot create. Two ideas usually follow from this: a robot cannot invent like a human, and a robot cannot write like a human. Let’s look at them.

1) A robot can’t invent

YES. Serendipity is a human experience. A person can stumble upon an accidental invention or discovery for no clear reason, as with an apple falling on someone’s head or a sudden brainwave. There is also the phenomenon of “eureka,” a moment of sudden insight that is hard to explain in logical terms. For this reason, many people believe that creative breakthroughs will always remain a human prerogative. Humans can act proactively and thrive on creativity, eureka moments, and serendipity. A robot’s actions, by contrast, are predetermined by an algorithm, at least as we understand them today.

That is why skeptics say robots will not be able, for example, to recognize the potential for a sensation in an event among many similar ones, as a human editor does. Moreover, a robot will not be able to decide to exaggerate an ordinary event into a sensation, as editors often do. How do you decide what to hype and what not? An editor knows; a robot doesn’t.

BUT. What if robots can do something humans can’t? They can cross-analyze enormous amounts of data and detect correlations. For example, analyzing consumer and political data might show that owners of red cars tended to vote for Bush. A human would struggle to find and explain such links. In a world of Big Data, cause-and-effect explanations are often unnecessary. Robots can discover striking correlations that matter for marketing, politics, and media. The world is full of them, but human journalists are often unable to see them.

What if an algorithm’s ability to come up with correlations compensates for and even replaces human serendipity, such as eureka moments and even the sense of humor? What if correlation is sometimes even more telling than causation? Facts derived from data may be as interesting and irrational as the outcomes of human creativity.

2) Robots don’t have a sense of style

YES. It’s true, robots don’t aim to write beautifully, and even if they had such a goal, how would “beauty” be defined?

BUT. If beauty cannot be calculated, human reactions to it can. Humans themselves can serve as a measure of beauty for robots. Imagine that all texts and people’s reactions to them—likes, shares, comments, click-throughs—are collected in a robot journalist’s database. Even today, algorithms can identify which headlines, topics, and keywords attract attention by observing people’s reactions. Editors guess, robots know. Human crowd A/B testing replaces the algorithm’s own sense of style.
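To make "editors guess, robots know" concrete, here is a minimal sketch of choosing a headline from observed reactions. The reaction log, the headlines, and the numbers are invented, and click-through rate stands in for the fuller mix of likes, shares, and comments described above.

```python
# Hypothetical reaction log: headline -> (clicks, impressions).
# The headlines and numbers are invented for illustration.
reactions = {
    "Restaurant chain reports quarterly earnings": (120, 4000),
    "Pancake sales lift restaurant chain profit": (310, 4100),
    "Diners open their pocketbooks": (95, 3900),
}


def click_through_rate(clicks: int, impressions: int) -> float:
    """Share of readers who clicked after seeing the headline."""
    return clicks / impressions if impressions else 0.0


def best_headline(log: dict[str, tuple[int, int]]) -> str:
    """Pick the headline with the highest observed click-through rate."""
    return max(log, key=lambda headline: click_through_rate(*log[headline]))


print(best_headline(reactions))
```

The algorithm has no taste of its own here. It borrows the crowd's taste by measuring it.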

Now imagine that, with the help of biometrics—eye tracking already allows this—robots could analyze people’s physiological responses to specific words, idioms, epithets, syntactic constructions, and images. Such technologies are becoming more and more affordable; the only question is the amount of data and the processing speed.

Such systems can automatically produce more attractive headlines and texts. But new problems may arise: 1) attractiveness could turn into white noise; 2) the chase for human reactions could make generative journalism degenerative. Still, as long as this is a transitional period, the analysis of human reactions can compensate for robots’ lack of senses.

In other words, for every argument about what robots can’t do, there is an equally strong argument about what humans can’t do. In this competition of capabilities, robots and humans end up in a tie.

The competition has just begun, yet it is already a tie.

Road map for robot journalism

Based on what is already known about robot content creators, one can imagine how they are going to conquer the world. Or occupy the journalistic profession, for a start. Here are the key points of how robot journalism is going to operate in the future.

1) Big Data. Algorithms are designed to manage vast amounts of data. Their ability to detect correlations may in some ways replace human creativity. Many of the best human minds are now working on this problem—and they care little about saving journalism.

“If there is a free press, journalists are no longer in charge of it. Engineers who rarely think about journalism or cultural impact or democratic responsibility are making decisions every day that shape how news is created and disseminated,” said Emily Bell, professor at the Columbia Journalism School, in a speech whose title speaks for itself, “Silicon Valley and Journalism: Make up or Break up?”

2) Audience reaction analysis. By monitoring, collecting, and analyzing journalistic texts (syntax, vocabulary) together with people’s reactions to them, algorithms can calculate which texts and which features generate more likes, reads, reposts, and comments.

Some niches may remain where only humans do journalism. These will become a kind of sanctuary for bio-columnists. But on an industry scale, such niches that robots cannot cover will make little difference. Moreover, the share of human-made journalism will keep shrinking as the overall volume of robot-produced content continues to grow.

3) Biometrics. Once robots gain access to human nonverbal reactions and body language, they will be able to calculate subtle reactions instantly. Imagine someone reading a story about Trump: the touchscreen detects sweaty hands, the webcam registers dilated pupils, the microphone picks up faster breathing. This technology can replace an editor’s intuition. Applied to millions of people, it could also become the largest lie detector in history.

Technology is replacing humans with algorithms at high speed. Why should algorithms stop developing? It’s an open highway, leading to artificial intelligence, by the way.

Forecasts and suggestions

Kristian Hammond, co-founder and CTO of Narrative Science, believes that 90% of news could be written by computers by 2030. Hammond also said that a computer could write a story worthy of a Pulitzer Prize by 2017.

I would add that we are entering both a quantitative and a qualitative competition with our cyber-colleagues. In the quantitative contest, bio-journalists have already lost. In the qualitative one, we may lose within five to seven years. (Can’t help but add with hindsight: ChatGPT arrived exactly seven years after that prediction, while a Pulitzer Prize for a robo-journalist hasn’t happened yet. AM, from 2026.)

It is interesting that in the early stage of the transition from human journalism to robot journalism, editors themselves will be the ones to kill the profession. Newsrooms have to produce as much content as possible to generate traffic. Journalists often have no time for serious topics; instead, they are pushed to produce more and more stories for the website. It becomes motion for motion’s sake. Journalism theorist Dean Starkman called this effect the “hamsterization of journalism,” or the Hamster Wheel. Hamsterization reduces the time journalists spend on each story so they can produce more stories—“do more with less.”

Let’s imagine that a good article, which means good journalism, can attract thousands of readers. But what if a thousand stories produced in the same time bring a hundred readers each? When traffic is king, editors don’t need the best journalists; they need fast journalists. They need churn, they need churnalists. Who will an editor choose—a prima donna journalist with rising salary demands and three stories a month, or a faultless algorithm with ever-lower maintenance costs and three stories a minute?
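The editor's arithmetic can be spelled out. The sketch below uses the figures from the paragraph above, with two assumptions added only to have numbers to compare: "thousands of readers" is taken as 5,000, and a month as 30 days of round-the-clock output.

```python
# Figures from the text; "thousands of readers" is taken as 5,000 here,
# which is an assumption made only to have a number to compare.
readers_one_good_article = 5_000
readers_churn = 1_000 * 100  # a thousand stories, a hundred readers each

# Three stories a month versus three stories a minute (30-day month assumed).
stories_human_per_month = 3
stories_algorithm_per_month = 3 * 60 * 24 * 30

traffic_ratio = readers_churn / readers_one_good_article
output_ratio = stories_algorithm_per_month / stories_human_per_month

print(f"Churn brings {traffic_ratio:.0f} times the readers of one good article")
print(f"The algorithm files {output_ratio:,.0f} times as many stories per month")
```

Under these assumptions, the gap in traffic and output is large enough that even a sizable difference in quality per story would not close it.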

The Associated Press bought the WordSmith service not because the algorithm writes better than humans do. The reason is simple: it writes more and faster. Debates about text quality miss the point. Robots will conquer newsrooms not for belletristic reasons, but for economic and, paradoxically, “professional” ones.

Thus, the robots’ advent is unstoppable. Under these conditions, the most beneficial strategy for newsrooms is to be among the first at the beginning of robotization and among the last at the end of it. Using algorithms to generate texts can be an effective PR strategy today, attractive to both audiences and investors. But when algorithms flood the market, a rare human voice will be in demand amid the robots’ metallic squeak.

In this sense, strange as it may sound, human journalism may become especially valued in the final stage of media robotization. Moreover, editorial mistakes may become particularly valued and even attractive. That will last at least until robots learn to simulate editorial mistakes too.

In 2014, WordSmith, one of the two most powerful news-writing algorithms, wrote and published one billion news stories. That may be as many as, or even more than, all human journalists produced that year. Part of that billion simply expanded the overall volume of content. The rest already replaced work that would otherwise have been done by humans.

The market is going to demand more and more. Nothing can stop robots from writing as much as they are required to, since their only limit is the volume that people can read. Even this limit will disappear once the readers are also robots.

See also books by Andrey Mir:

The Viral Inquisitor and other essays on postjournalism and media ecology (2024)

Digital Future in the Rearview Mirror: Jaspers’ Axial Age and Logan’s Alphabet Effect (2024)

[1] The extended version of this essay was also published in 2018: “AI to Bypass Creativity. Will Robots Replace Journalists? (The Answer Is “Yes”).” Information, 2018, 9(7), 183.